A proxy server lets you route requests through randomly assigned IP addresses of cloud machines, hiding the scraping server's IP so you can scrape data from websites anonymously. This matters because sending thousands of requests from the same IP address may result in 4xx errors or a temporary block if the website enforces a rate limit.

To keep the Agenty web scraper from being blocked, blacklisted or misled by target websites, and to keep web scraping scalable for businesses, we provide a smart rotating proxy pool. It automatically refreshes the IP address every 30 seconds and gives you full control over which proxy, country and city to use, and when auto-rotation should happen in your web scraping agent. This allows data scraping professionals to succeed in 99.9% of their website scraping projects running on the Agenty cloud.

Scraping proxies

Agenty offers static, residential and geo-based proxy servers, available on different plans. You can use these servers for anonymous web scraping, with the IP address auto-rotating every 30 seconds to avoid being blocked while scraping sensitive websites.

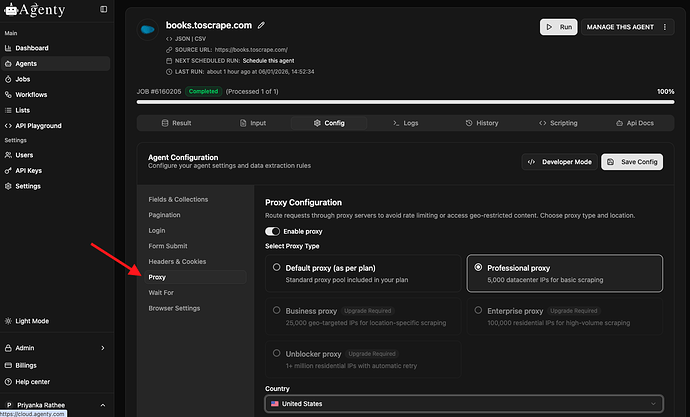

- Go to your agent page and click the config tab to change the proxy configuration.

- Scroll down to the proxy settings and use the Select a proxy option to choose a proxy server available on your plan.

- Use the country setting to specify which country's IP address should be used when scraping a particular website.

With these settings, the IP address rotates automatically on a timed interval, or after every few requests, to stay under the website's rate limit.
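As a rough illustration of rotating on a timer or after a fixed number of requests, the sketch below cycles through a list of proxies and switches to the next one when either limit is reached. It is not Agenty's implementation: the class name `RotatingProxyPool`, its parameters and the default limits are all assumptions made for this example.

```python
import itertools
import time
from typing import Callable, Iterable


class RotatingProxyPool:
    """Cycle through proxies, switching after a time limit or a request count.

    Hypothetical helper for illustration only; not part of Agenty.
    """

    def __init__(
        self,
        proxies: Iterable[str],
        max_age_seconds: float = 30.0,   # assumed rotation interval
        max_requests: int = 10,          # assumed per-proxy request budget
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        proxy_list = list(proxies)
        if not proxy_list:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(proxy_list)
        self._max_age = max_age_seconds
        self._max_requests = max_requests
        self._clock = clock
        self._rotate()

    def _rotate(self) -> None:
        # Move to the next proxy and reset both counters.
        self._current = next(self._cycle)
        self._started_at = self._clock()
        self._served = 0

    def get(self) -> str:
        """Return the proxy to use for the next request."""
        expired = self._clock() - self._started_at >= self._max_age
        exhausted = self._served >= self._max_requests
        if expired or exhausted:
            self._rotate()
        self._served += 1
        return self._current
```

The value returned by `get()` could then be passed to an HTTP client as its proxy setting for each outgoing request. The clock is injectable so the time-based rule can be exercised without waiting.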

The number of IPs and the proxy type depend on your pricing plan. We recommend the Business or Enterprise plan for high-frequency web scraping and data collection.

| Plan | Static Proxy (Pro Plan) | Residential Proxy (Business Plan) | Residential Proxy (Enterprise Plan) |
|---|---|---|---|
| Location | Not specified | US, UK | Global |
| Number of IPs | Limited | Limited | High |
| Use Case | General | Basic web scraping | Enterprise level web scraping |

Static Proxy

Static proxies are data center proxies. When you select this proxy type in your scraping agent, each web page crawling request is routed through one of the data centers in the available regions. Agenty provides static proxies with up to 5,000 static IPs in more than 40 countries, such as the United States (US), United Kingdom (UK), Canada, Australia, Japan, Germany, France, India, Singapore and more.

Residential Proxy

A residential proxy is an IP address assigned by an Internet Service Provider (ISP) to an individual (also called a home user). Each residential proxy address therefore has a physical location, with a latitude and longitude, which makes it the IP address of a real personal computer.

Such proxies are nearly impossible to detect or block, and they offer very high anonymity for web scraping, which leads to a high success rate in enterprise-level data scraping projects.

Geo-based Proxy

Geo-based proxies let you route your web crawling requests through a selected country or city. For example, you might want to extract store-level pricing or promotions from an ecommerce website that has stores in 100+ zip codes and that selects the store location automatically based on your IP's geolocation.

That means the website shows different product pricing for each city and country. In that case, you need a geo-based proxy in your web scraping agent to scrape the local prices and content from that website.
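To make the idea concrete, here is a minimal sketch of picking a proxy by location, preferring a city-level proxy and falling back to a country-level one. The proxy URLs, the `GEO_PROXIES` table and the `pick_geo_proxy` function are hypothetical and exist only for this example. In Agenty itself the proxy is chosen through the agent's proxy settings.

```python
from typing import Dict, Optional, Tuple

# Hypothetical proxy endpoints keyed by (country, city).
# A city of None marks the country-wide fallback.
GEO_PROXIES: Dict[Tuple[str, Optional[str]], str] = {
    ("US", "New York"): "http://us-nyc.proxy.example:8080",
    ("US", None): "http://us.proxy.example:8080",
    ("GB", None): "http://gb.proxy.example:8080",
}


def pick_geo_proxy(country: str, city: Optional[str] = None) -> str:
    """Prefer a city-level proxy, then fall back to the country-level one."""
    for key in ((country, city), (country, None)):
        if key in GEO_PROXIES:
            return GEO_PROXIES[key]
    raise LookupError(f"no proxy configured for {country}")
```

Scraping the same product page once per location, each time through the proxy returned for that location, would then yield the local price shown to visitors from that city or country.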

The proxies are maintained by the Agenty team. You have select-only access: you choose which proxy your web scraping agent uses to scrape data from websites anonymously.